September 30, 2022

The misperceived confirmation bias, by Dr. Howard Rankin

Humanized AI must include the confirmation bias, by Michael Hentschel

The weeds and seeds of the confirmation bias, by Grant Renier

The misperceived confirmation bias

Dr. Watson turned to his friend and said “Holmes, I think we’ve done it!”

“We’ve done what?” asked Sherlock.

“Why, we’ve solved the problem! You suspected that the criminal was drunk, and we know who was indeed inebriated at the event. And now we have found that the footprints that lead to the site of the crime have the irregular pattern of a drunkard. The case is solved!”

“Not so fast, my dear Watson,” cautioned Holmes. “Just because circumstantial evidence, or any evidence, seems to support your theory doesn’t make it right. It’s just another piece of the puzzle, not the solution itself.”

“Do you mean that the drunkard might not be the perpetrator?”

“It’s very possible. You must not be overinfluenced by the apparent confirmation of one perception or theory. Obviously, we need to confirm our ideas, but confirmation of one piece of the puzzle doesn’t mean the mystery is solved.”

“But I’m sure the drunkard was the criminal,” complained Watson.

“That’s where you are going off the rails, Watson. You need to be especially cautious when you find evidence that fits in with your theory or opinion. That’s when you need to keep searching for alternatives; otherwise your search for confirmatory evidence will be biased.”

The notion that human cognitive processes are flawed has been around for centuries, if not longer. With the emergence of cognitive neuroscience and books like Daniel Kahneman’s Thinking, Fast and Slow, the idea of cognitive bias has really taken root. There are many biases that supposedly distort perception and reasoning. However, this approach has itself been subject to misperception.

Many cognitive biases can be seen as defense mechanisms that people use to avoid responsibility, a default human trait, probably because social connection and belonging are critical and no one wants to be cast out of the tribe. However, we have to be careful not to assume that any strategy identified as a cognitive bias is always a cognitive bias.

Seeking confirmation is not inherently a bias, but a natural, reasonable and responsible cognitive strategy. It only becomes a bias when it is misused: when someone seeks confirmation selectively, overvalues it, and doesn’t put it into the context of other effective strategies.

Who doesn’t want to look for confirmation of their hypotheses while problem solving? Indeed, seeking feedback about one’s perceptions is necessary. Only when we look for confirmation in a disorganized and unbalanced way do we risk misleading ourselves.

The same is true of the other major “cognitive biases” that are included in Intuality AI’s prediction machine.

Enhanced Humanized AI is the only way to create superior real-world predictions. The use by Intuality AI of these 12 critical investigative strategies is one of the reasons why it has been so successful across different applications.

by Dr. Howard Rankin

Humanized AI must include the confirmation bias

Human Behavior is as complex as we can imagine. It has been deeply studied as a vast, complex domain of decisions that are made both rationally and irrationally. Casual observers might say humans tend ONLY to be able to decide irrationally, while computers are ONLY able to decide rationally. But True Reality results from the mix of all these decisions. Anything touched or thought about by humans involves irrationality, which is to say, everything involves irrationality. Almost no AI thus far is programmed to comprehend this.

If we want to better predict future reality, our computer needs to understand human irrationality. If we want to have an effective AI, we must humanize its inherent computer-only rationality. We call this “Intuitive Rationality.”

One of the 12 Human Behaviors that Matter Most: The “Confirmation” Conundrum. Humans tend to decide once they are convinced that they already know everything needed to make a decision. They become convinced by any “Confirmation” from coincidental information they have not deeply tested. The conundrum is that, hearing what they want to hear, they are convinced of facts that may well be faked or incomplete. Computers are over-educated, but lack human common sense.

How We Transform AI into a higher-awareness Humanized AI to make better predictions: IntualityAI first analyzes all available big data for its patterns, including recognition of repetitive patterns where humans act irrationally (12 human biases, of which Confirmation Bias is only one). IntualityAI can therefore enhance typical linear data progression calculations. Adjustment factors are applied where human behavior has in the past veered from purely rational expectations, and future expectations are thereby adjusted to obtain a more real-world prediction.
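The adjustment step described above can be illustrated with a minimal sketch. Everything in it is hypothetical and is not IntualityAI's actual algorithm: the function names, the average-step extrapolation standing in for a "linear data progression," and the use of the mean past deviation as the adjustment factor are all assumptions made for illustration.

```python
from statistics import mean


def linear_forecast(history):
    """Extrapolate one step ahead using the average step between observations.

    Assumption: this stands in for a "linear data progression" calculation.
    Requires at least two observations.
    """
    steps = [later - earlier for earlier, later in zip(history, history[1:])]
    return history[-1] + mean(steps)


def adjusted_forecast(history, past_forecasts, past_actuals):
    """Shift the linear forecast by the average past deviation.

    Assumption: the "adjustment factor" is the mean of (actual - forecast)
    over earlier periods, i.e. how far real outcomes veered from the purely
    rational expectation. With no past deviations, no adjustment is applied.
    """
    deviations = [actual - forecast
                  for forecast, actual in zip(past_forecasts, past_actuals)]
    adjustment = mean(deviations) if deviations else 0.0
    return linear_forecast(history) + adjustment


if __name__ == "__main__":
    history = [10, 12, 14]
    print(linear_forecast(history))
    print(adjusted_forecast(history, past_forecasts=[10, 12], past_actuals=[11, 13]))
```

In this toy example the outcomes have consistently landed one unit above the linear expectation, so the adjusted forecast is shifted up by that amount.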

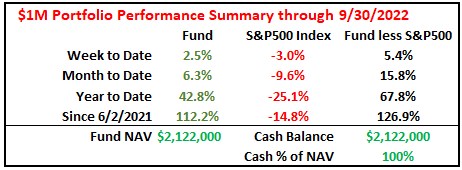

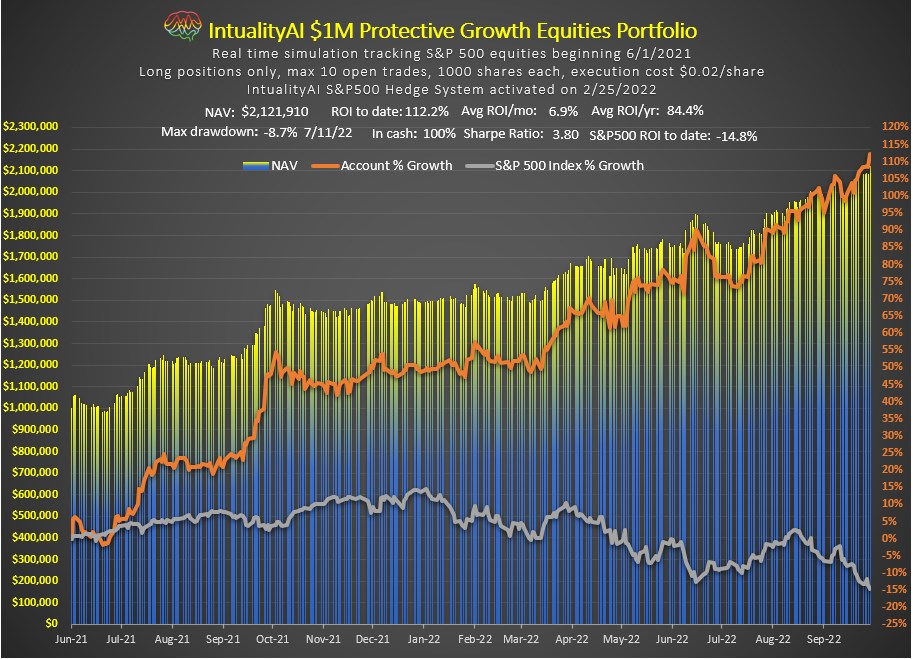

How can this be monetized? IntualityAI has successfully tracked and traded a virtual $1M test portfolio of stocks, with outstanding performance 123% better than the S&P over the last 16 months, effectively an 82.2% annualized ROI. We are now embarking on funding a real portfolio of $1M in equities traded solely via the IntualityAI algorithm.
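For readers who want to check how a multi-month return converts to an annualized figure, here is a minimal sketch using standard compounding. It assumes, and the text does not state this, that the 123% figure can be treated as a cumulative return over the 16 months; the function name is hypothetical.

```python
def annualized_return(cumulative_return, months):
    """Convert a cumulative return over `months` into a compounded annual rate.

    cumulative_return is a fraction, e.g. 1.23 for 123%.
    Standard formula: (1 + R) ** (12 / months) - 1.
    """
    return (1.0 + cumulative_return) ** (12.0 / months) - 1.0


if __name__ == "__main__":
    # Assumption: 123% treated as the cumulative return over 16 months.
    rate = annualized_return(1.23, 16)
    print(f"{rate:.1%}")
```

Under that assumption the result comes out at roughly 82%, which is in the neighborhood of the annualized figure quoted above.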

by Michael Hentschel

The Weeds and Seeds of the Confirmation Bias

The confirmation bias is a part of all our decisions, whether we like it or not, and must be included in the logic of true AI. The reinforcement of historical data with new data is critical in calculating the probabilities of future events.

IntualityAI’s confirmation heuristic is designed to give less mathematical weight to new data that confirms current probabilities and more weight to new data that does not reinforce them. This may seem contrary to the evidence that people tend to give greater value to evidence (reinforcement) that confirms their current beliefs, partly to build and preserve self-esteem. But IntualityAI needs to compete successfully in this human world by simulating the effects of confirmation and responding quickly to the inevitable failure of confirmation when circumstances are complex.

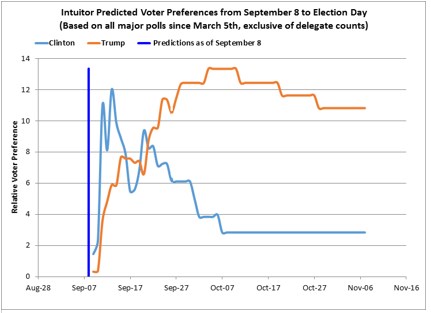

Empirical studies covering 40 years of data from a wide range of applications have settled on a consistent 40% discount of positive confirmation and a 0% discount of negative confirmation. This skew allows IntualityAI to react quickly when crowd confirmation bias fails. It correctly predicted the 2016 U.S. presidential election two months in advance, in part as a result of switching its own confirmation bias from Clinton to Trump on September 20, contrary to polls showing a consistent confirmation bias for Clinton.
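The asymmetric weighting described above (a 40% discount on positive confirmation, none on negative confirmation) can be sketched as a simple probability update. This is an illustration under stated assumptions, not IntualityAI's implementation: the function name, the `learning_rate` parameter, and the rule that evidence "confirms" when it pushes the probability further in the direction it already leans are all hypothetical.

```python
POSITIVE_DISCOUNT = 0.40  # from the text: confirming evidence is discounted 40%
NEGATIVE_DISCOUNT = 0.00  # from the text: disconfirming evidence is not discounted


def update_probability(prior, observed, learning_rate=0.5):
    """Move `prior` toward `observed`, damping moves that confirm the current lean.

    Assumptions (not from the source):
    - the belief "leans yes" when prior >= 0.5, otherwise "leans no";
    - evidence confirms when it shifts the probability further toward that lean;
    - learning_rate sets how far an undiscounted update moves toward the evidence.
    """
    leaning_yes = prior >= 0.5
    shift = observed - prior
    confirms = shift != 0 and ((shift > 0) == leaning_yes)
    discount = POSITIVE_DISCOUNT if confirms else NEGATIVE_DISCOUNT
    updated = prior + learning_rate * (1.0 - discount) * shift
    return min(1.0, max(0.0, updated))  # keep the result a valid probability


if __name__ == "__main__":
    print(update_probability(0.6, 0.8))  # confirming evidence: damped move
    print(update_probability(0.6, 0.4))  # disconfirming evidence: full move
```

With equal-sized evidence in either direction, the disconfirming update moves the estimate further than the confirming one, which is the mechanism that would let a model of this kind abandon a crowd-confirmed position quickly.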

Wikipedia, under “Confirmation bias,” reports: “. . . study of biased interpretation occurred during the 2004 U.S. presidential election and involved participants who reported having strong feelings about the candidates. They were shown apparently contradictory pairs of statements, either from Republican candidate George W. Bush, Democratic candidate John Kerry or a politically neutral public figure. They were also given further statements that made the apparent contradiction seem reasonable. From these three pieces of information, they had to decide whether each individual’s statements were inconsistent. There were strong differences in these evaluations, with participants much more likely to interpret statements from the candidate they opposed as contradictory.”

While there are many publications about the confirmation bias, statistical support is often lacking. One study, “Modeling confirmation bias and polarization” (Scientific Reports, #40391, 2017), offers evidence supporting the 40% discount value used by IntualityAI. Another interesting study, “Consequences of Confirmation and Disconfirmation in a Simulated Research Environment” (Quarterly Journal of Experimental Psychology, 1973), by Mynatt et al., reports: “The use of a more complex environment . . . support for the existence of a pervasive confirmation bias in complex tasks. Subjects permanently abandoned falsified hypotheses only 30% of the time.”

Overwhelming evidence indicates that the confirmation bias must be part of any true AI in understanding the influence of new data on historical data. Such an AI must be wary of the “believing what it already knows” trap.

by Grant Renier

IntualityAI

A humanized AI enabled to recognize human perceptions and behaviors to reflect a more intuitive rationality for more successful predictive analysis

Simulated Perceptions of Human Behavior

Symmetry Bias

Memory Decay Bias

Quality Bias

Quantity Bias

Gain Bias

Environmental Bias

Fast and Frugal Bias

Availability Bias

Confirmation Bias

Anchoring Bias

Risk Aversion Bias

Hot Hand Bias

This content is not for publication

©Intuality Inc 2022-2024 ALL RIGHTS RESERVED